AI Memory Revolution: How EverMemOS Gives Machines a True "Soul"

Why AI Can Think But Not Remember

Ever wondered why modern AI can write brilliant articles and solve complex math problems yet can't remember what you told it last week?

This isn’t a minor inconvenience — it’s a fundamental flaw in current AI systems.

The Problem of "Daily Amnesia"

Imagine a personal assistant who wakes up each morning with no memory of previous days. Every conversation starts from scratch.

That’s the reality of large language models today — like gifted geniuses with severe amnesia:

- Each dialogue is a blank slate

- No accumulated experience

- No personal understanding

- No ongoing growth or evolution

---

State of the Industry

Many companies have tried solutions — from simple chat history storage to complex RAG systems — but these are temporary fixes, not fundamental cures.

Recently, I found a promising development:

A team from Shanda Group called EverMind launched EverMemOS — a long-term memory operating system for AI agents.

Record-Breaking Performance

EverMemOS posts impressive benchmark results:

- 92.3% on LoCoMo (a leading long-term memory benchmark)

- 82% on LongMemEval-S

These results exceed the previous best scores on these benchmarks and target limitations seen in real-world use, opening the door for AI to evolve from a tool into a true intelligent agent.

---

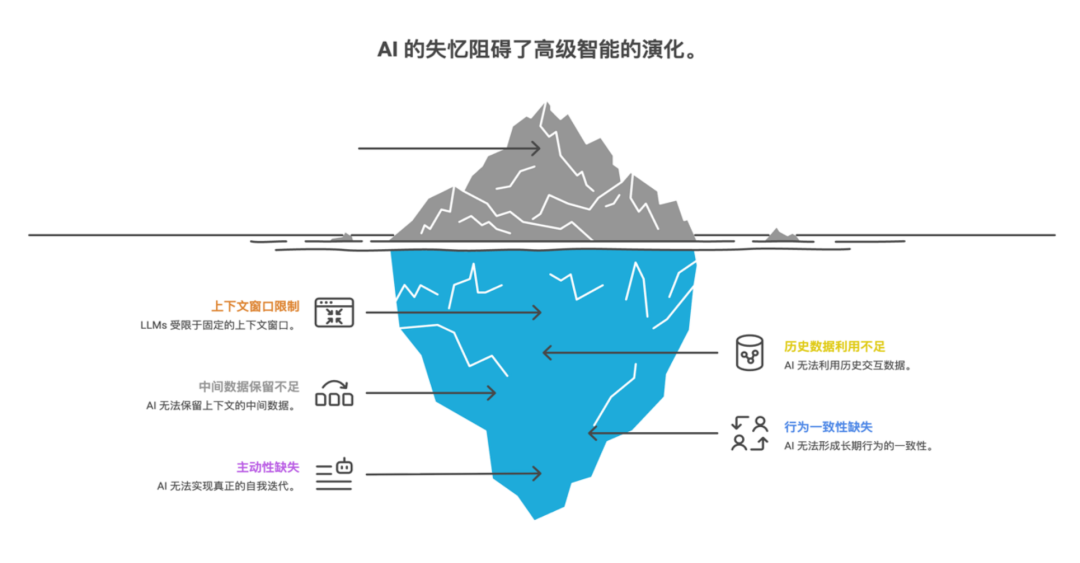

Why Memory Is AI’s Bottleneck

Tool vs. Agent

The line separating an intelligent tool from a true intelligent agent is memory.

Without memory:

- No behavioral consistency

- No proactivity

- No self-improvement

It's like a person who wakes up each day having forgotten yesterday: building relationships, personality, or experience becomes impossible.

---

The Context Window Trap

Current LLMs are confined to fixed context windows:

- Once a conversation exceeds this limit, earlier information is forgotten

- This leads to fragmented context and contradictions

- Users get frustrated when the AI forgets background details mid-discussion
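
To make the trap concrete, here is a minimal sketch of a fixed-size window. It assumes a hypothetical `build_context` helper and approximates tokens by splitting on whitespace; real models use their own tokenizers and truncation policies, so treat this as an illustration rather than how any particular LLM works.

```python
from collections import deque


def build_context(messages, max_tokens):
    """Keep the most recent messages that fit in a fixed token budget.

    Assumption: one whitespace-separated word counts as one token.
    """
    window = deque()
    used = 0
    # Walk backwards from the newest message to the oldest.
    for message in reversed(messages):
        cost = len(message.split())
        if used + cost > max_tokens:
            break  # everything older than this point is dropped
        window.appendleft(message)
        used += cost
    return list(window)


history = [
    "My name is Dana and I am allergic to peanuts.",
    "Can you suggest a dinner recipe?",
    "Something quick, under thirty minutes please.",
    "Actually, make it a dessert instead.",
]

context = build_context(history, max_tokens=20)
for line in context:
    print(line)
```

With a budget of 20 tokens, the three most recent messages fit and the first one, which carries the allergy information, falls out of the window. The model would then plan a dessert with no idea that peanuts are off the table.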

---

Critical Capabilities Limited by Poor Memory

- Personalization: Can't retain preferences, habits, past interactions

- Consistency: Prone to contradictions and erratic advice

- Proactivity: Needs memory of long-term goals and progress tracking

---

Industry Shift Toward Memory

Platforms like Claude and ChatGPT are now integrating long-term memory.

In 1–2 years, AI apps without persistent memory risk seeming obsolete — much like software without internet access today.

---

Persistent Systems & Unified Workflows

For creators handling multi-platform projects, the AiToEarn official site offers:

- Open-source global AI content monetization

- AI creation + publishing to Douyin, Kwai, WeChat, Bilibili, Facebook, Instagram, LinkedIn, YouTube, Pinterest, X, etc.

- Analytics + AI model ranking

Though not solely a memory solution, AiToEarn shows how persistent workflows maximize AI's long-term utility.

---

Why Existing Solutions Fall Short

Common gaps in current memory systems:

- Narrow focus (only works for one-on-one chat, not team collaboration)

- Difficulty balancing accuracy, speed, usability, adaptability

- Slow retrieval vs. poor accuracy trade-offs

- Complex deployment processes

---

From Human Brain to AI Innovation

EverMind drew inspiration from the human brain’s memory mechanisms:

- Encoding sensory signals

- Hippocampal indexing

- Cortex-based long-term storage

- Prefrontal–hippocampus collaboration for recall

Chen Tianqiao's "Temporal Structure" Insight

Modern AI models work on a spatial paradigm — static snapshots of data.

The human brain works on a temporal paradigm — continuous, dynamic, predicting and recalling across time.

EverMemOS aims to bridge this gap — giving AI temporal continuity so it can:

- Remember the past

- Adapt in the present

- Predict the future

This transforms AI from a cold algorithm into an agent with an evolving identity.

---

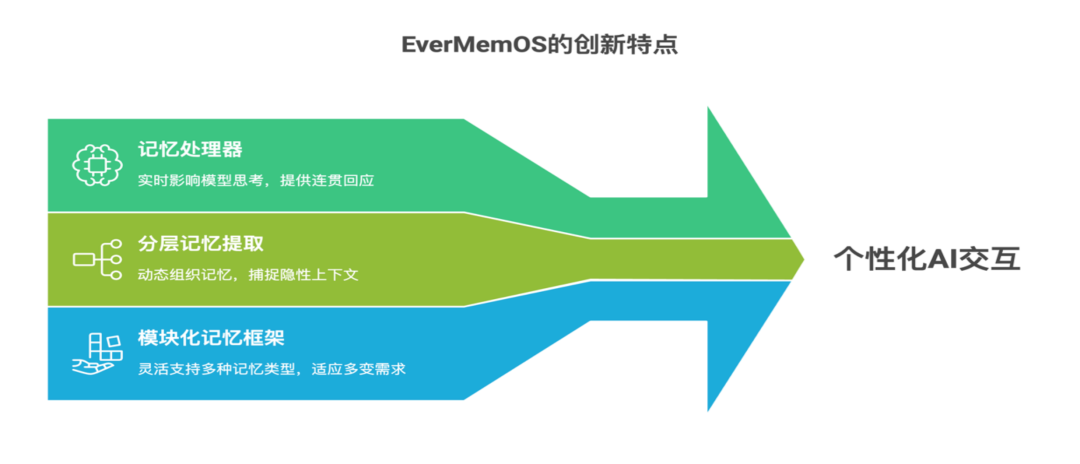

Core Innovations of EverMemOS

1. Memory Processor Paradigm

Moves beyond storage to active application:

- Dynamically integrates past experience into current reasoning

- Mirrors human recall influencing decision-making

---

2. Layered Memory Extraction

Simulates how humans:

- Group related memories

- Identify causal links

- Separate important details from trivia

This improves accuracy and context relevance over flat text-similarity searches.

---

3. Scalable Modular Memory Framework

Supports multiple memory types:

- Scenario memory

- User profiles

- Preference logs

- Emotional and factual datasets

The framework auto-selects the optimal memory strategy for each scenario.
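
One way to picture strategy selection is a routing table from scenario to memory types. The sketch below is purely illustrative: the `MemoryType` enum, the scenario names, and `select_memory_types` are hypothetical and are not taken from the EverMemOS codebase, which may choose strategies in a very different way.

```python
from enum import Enum


class MemoryType(Enum):
    """Memory types named in the list above (identifiers are made up)."""
    SCENARIO = "scenario"
    PROFILE = "profile"
    PREFERENCE = "preference"
    EMOTIONAL = "emotional"
    FACTUAL = "factual"


# Hypothetical routing table: which memory types to consult per scenario.
STRATEGIES = {
    "recommendation": [MemoryType.PREFERENCE, MemoryType.PROFILE],
    "support_chat": [MemoryType.EMOTIONAL, MemoryType.SCENARIO],
    "question_answering": [MemoryType.FACTUAL],
}


def select_memory_types(scenario):
    """Return the memory types for a scenario, or all types if unknown."""
    return STRATEGIES.get(scenario, list(MemoryType))


chosen = select_memory_types("recommendation")
fallback = select_memory_types("something_new")
print([m.value for m in chosen])
print([m.value for m in fallback])
```

A static table is the simplest possible design. A real system would more plausibly pick a strategy from the query itself, but the modular idea is the same: each memory type sits behind a common interface, so new types can be added without touching the caller.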

---

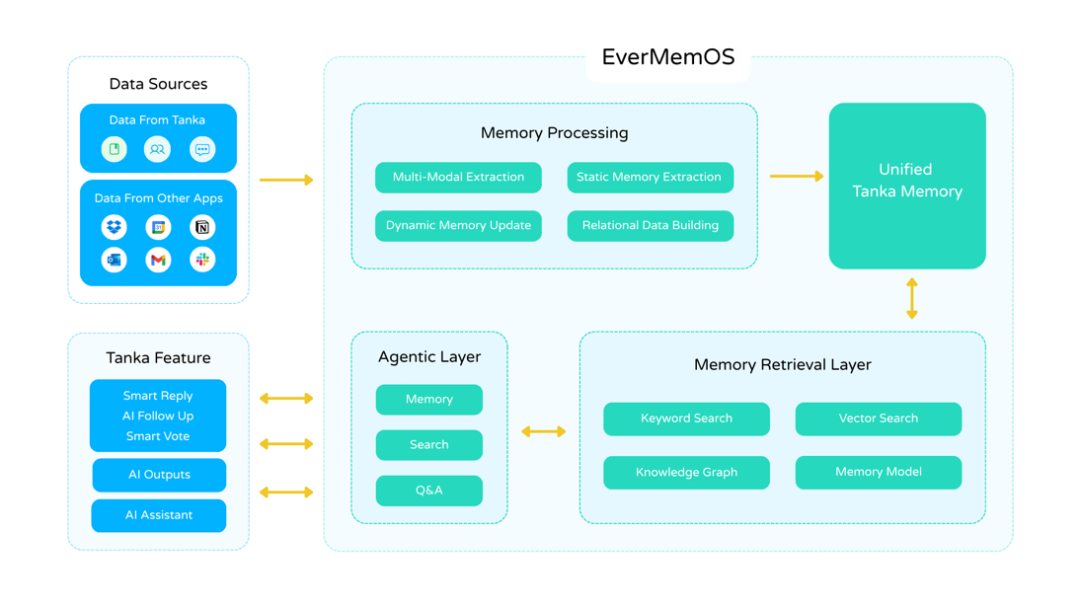

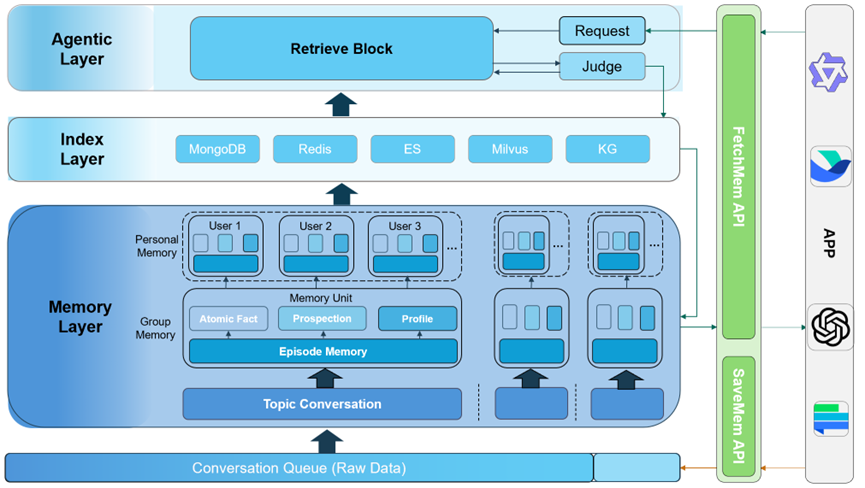

The Four-Layer Architecture

- Agent Layer – task understanding, decision-making (like prefrontal cortex)

- Memory Layer – structured long-term storage (like cerebral cortex)

- Index Layer – multimodal retrieval (like hippocampus)

- Interface Layer – API/MCP integrations (like sensory organs)

This forms a closed cognitive loop:

- Input via Interface Layer

- Locate via Index Layer

- Retrieve via Memory Layer

- Reason via Agent Layer
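
The loop above can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the class names mirror the layer names in this article, but the methods (`store`, `fetch`, `add`, `locate`, `respond`) and the naive keyword index are invented and do not reflect the actual EverMemOS API.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryLayer:
    """Long-term storage: maps memory IDs to stored text."""
    records: dict = field(default_factory=dict)

    def store(self, memory_id, text):
        self.records[memory_id] = text

    def fetch(self, memory_ids):
        return [self.records[i] for i in memory_ids]


@dataclass
class IndexLayer:
    """Locates memory IDs with a naive keyword index (a stand-in for
    the multimodal retrieval described above)."""
    keyword_to_ids: dict = field(default_factory=dict)

    def add(self, memory_id, text):
        for word in set(text.lower().split()):
            self.keyword_to_ids.setdefault(word, set()).add(memory_id)

    def locate(self, query):
        ids = set()
        for word in query.lower().split():
            ids |= self.keyword_to_ids.get(word, set())
        return sorted(ids)


class AgentLayer:
    """Reasons over the query plus recalled memories (here: formats them)."""

    def respond(self, query, memories):
        recalled = "; ".join(memories) or "nothing"
        return f"Query: {query} | Recalled: {recalled}"


def handle_request(query, index, memory, agent):
    """Interface-layer entry point: input -> locate -> retrieve -> reason."""
    ids = index.locate(query)
    recalled = memory.fetch(ids)
    return agent.respond(query, recalled)


memory = MemoryLayer()
index = IndexLayer()
agent = AgentLayer()

text = "User prefers vegetarian recipes"
memory.store("m1", text)
index.add("m1", text)

reply = handle_request("Suggest recipes for dinner", index, memory, agent)
print(reply)
```

The point of the separation is that the index only knows where things are, the memory layer only knows what they are, and the agent only reasons over what it is handed. Any one of the three can be swapped out without changing the others.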

---

Memory Intelligence In Action

The Memory Perception Layer powers:

- Hybrid Retrieval: semantic + keyword + RRF fusion

- Intelligent Re-ranking: prioritizes critical info

- Agentic Retrieval Mode: multi-turn recall for complex queries

- Fast Mode: speed optimization for latency-sensitive tasks

- Reasoning Fusion: integrates episodic, preference, and profile memories
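
For readers unfamiliar with RRF (Reciprocal Rank Fusion), here is a minimal sketch of the standard formula, where each document scores the sum of `1 / (k + rank)` across the ranked lists it appears in. The constant `k=60` is the value commonly used in the literature; whether EverMemOS uses this value, or this exact variant, is an assumption. The memory IDs are made up.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of IDs into a single ranking.

    Each ID receives 1 / (k + rank) from every list it appears in,
    with ranks starting at 1. Ties are broken alphabetically by ID.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical results from a semantic search and a keyword search.
semantic_hits = ["m3", "m1", "m7"]
keyword_hits = ["m1", "m9", "m3"]

fused = reciprocal_rank_fusion([semantic_hits, keyword_hits])
print(fused)  # ['m1', 'm3', 'm9', 'm7']
```

Memories that both retrievers agree on (`m1`, `m3`) rise to the top, while those found by only one retriever fall behind. RRF needs only ranks, not raw scores, which is why it is a popular way to combine semantic and keyword search results that live on incomparable scales.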

---

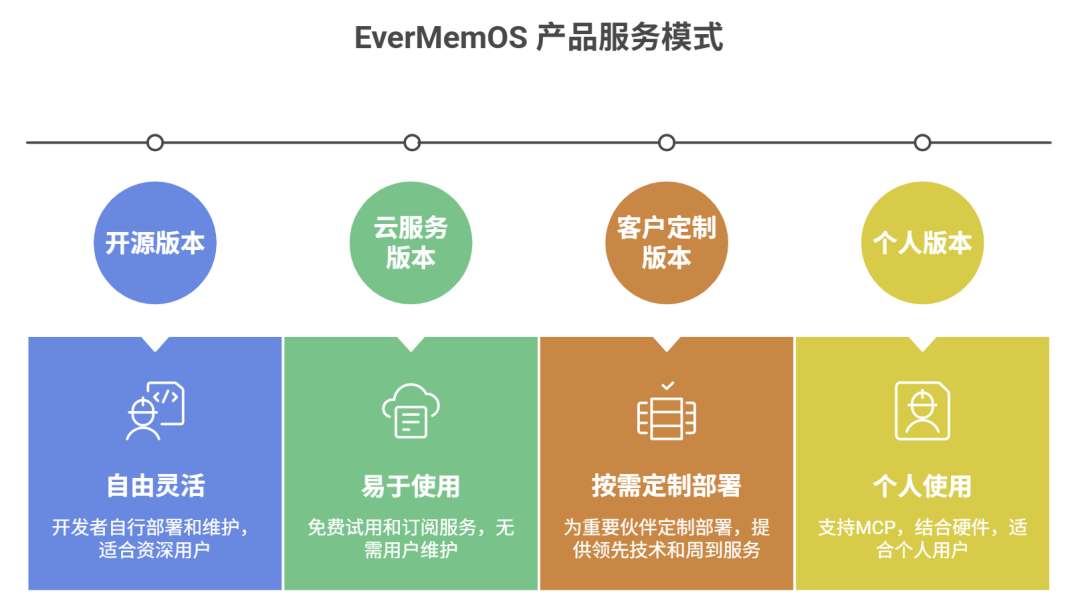

Open-Source Strategy & Ecosystem

EverMemOS is fully open-sourced: https://github.com/EverMind-AI/EverMemOS/

Benefits:

- Builds developer trust (transparent architecture)

- Rapid community-driven iteration

- Path toward standardizing AI memory interfaces

A cloud service version is planned for enterprise users, offering:

- Professional support

- SLA guarantees

- Scalable performance

---

The Future of AI Memory Systems

Expected evolution:

- Memory as a standard AI component, like databases in software

- AI shifts from tools to collaborative partners

- Persistent personalization: a stable AI "personality" and "values"

- Long-term span: context retention over months to years

- Emotional and implicit memory capture

- Complex causal reasoning based on stored experience

---

Final Thoughts

Memory isn't just storage — it's the infrastructure for intelligence.

An AI without memory:

- Lives only in the present

- Can't learn from the past or plan the future

EverMemOS represents a philosophical vision — giving AI a continuous identity.

This could someday raise profound ethical questions about rights, consciousness, and AI personhood.

---

References:

Website: http://everm.ai

GitHub: https://github.com/EverMind-AI/EverMemOS/
