CodeClash Benchmarks Large Language Models Through Multi-Round Programming Contests

CodeClash: A New Benchmark for Competitive LLM Coding

Researchers from Stanford, Princeton, and Cornell have introduced CodeClash, a novel benchmark designed to more effectively evaluate the coding abilities of large language models (LLMs).

---

Why CodeClash?

Traditional LLM coding benchmarks often focus on narrowly defined tasks such as bug fixing, algorithm implementation, or test writing.

However, the researchers argue that real-world software development is driven by broader, high-level objectives:

> Beyond maintenance work, developers often need to improve user retention, increase revenue, or reduce costs: tasks that require strategic breakdown, prioritization, and solution design.

CodeClash is structured to simulate these goal-oriented, iterative development cycles, capturing how LLMs perform when high-level goals replace explicit step-by-step instructions.

---

How CodeClash Works

Competition Format:

- Multiple Rounds:

- LLMs compete across several rounds to build the most effective codebase.

- High-Level Objectives:

- Competitions aim for outcomes like score maximization, resource gathering, and survival.

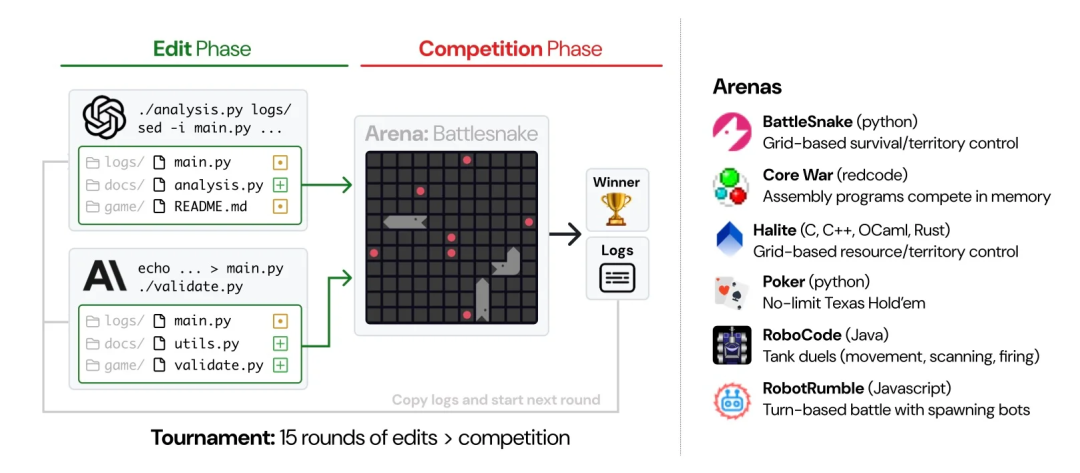

- Battle Arenas:

- The current arenas include:

- BattleSnake (grid-based survival)

- Poker (no-limit Texas hold'em)

- RoboCode (tank battles)

---

Two-Phase Cycle

Each round consists of two phases:

1. Editing Phase

   - LLMs edit and enhance their codebases based on the environment and available strategies.
   - Initial codebases contain game mechanics, sample bots, and suggested tactics, but LLMs must discover how to use them effectively.

2. Competition Phase

   - Codebases battle in the arenas.
   - Winners are determined by how well each codebase achieves the arena's objective.
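The two-phase cycle can be pictured as a simple loop. The sketch below is illustrative only: `Player`, `Tournament`, the `edit` callback, and the `arena` function are hypothetical names, not CodeClash's actual API, and a real editing phase would be an LLM agent modifying files rather than a Python function.

```python
"""Sketch of a multi-round edit/compete loop in the spirit of CodeClash.

Hypothetical names: Player, Tournament, the `edit` callback and the `arena`
function are illustrative assumptions, not CodeClash's actual API.
"""
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Codebase = Dict[str, str]  # hypothetical: file path -> file contents
Scores = Dict[str, float]  # player name -> score for one competition phase


@dataclass
class Player:
    name: str
    codebase: Codebase
    # Hypothetical editing agent: sees its own codebase plus all past round
    # logs and returns an updated codebase.
    edit: Callable[[Codebase, List[dict]], Codebase]


@dataclass
class Tournament:
    players: List[Player]
    # Hypothetical arena: runs every codebase once and scores each player
    # against the arena's objective (e.g. survival time, chips won).
    arena: Callable[[Dict[str, Codebase]], Scores]
    logs: List[dict] = field(default_factory=list)

    def play_round(self, round_no: int) -> str:
        # 1. Editing phase: each player revises its own codebase.
        for p in self.players:
            p.codebase = p.edit(p.codebase, self.logs)
        # 2. Competition phase: all codebases face off in the arena.
        scores = self.arena({p.name: p.codebase for p in self.players})
        # Ties go to whichever player the arena's result dict lists first.
        winner = max(scores, key=scores.get)
        # Results are logged so players can study them in later rounds.
        self.logs.append({"round": round_no, "scores": scores, "winner": winner})
        return winner

    def run(self, rounds: int) -> List[str]:
        return [self.play_round(r) for r in range(1, rounds + 1)]


if __name__ == "__main__":
    # Toy setup: player A appends one "+" to its bot each round, B never edits,
    # and the arena scores a bot by how many "+" characters it contains.
    a = Player("A", {"bot.py": ""}, lambda cb, logs: {"bot.py": cb["bot.py"] + "+"})
    b = Player("B", {"bot.py": ""}, lambda cb, logs: cb)
    t = Tournament([a, b], lambda cbs: {n: cb["bot.py"].count("+") for n, cb in cbs.items()})
    print(t.run(3))  # ['A', 'A', 'A']
```

Passing the full log history into `edit` is what lets a player adapt between rounds; a player that ignores it, like `B` here, never improves.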

---

Continuous Learning Between Rounds

- Competition logs are stored in a log library.

- LLMs can study these logs to refine their strategies in subsequent rounds.
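As a rough illustration of how stored logs might be mined between rounds, the sketch below keeps one JSON file per round and answers two simple questions a player could ask before editing: which rounds it lost, and what its average score is. The `LogLibrary` class and its file layout are hypothetical assumptions, not CodeClash's actual log format.

```python
"""Sketch of a file-based log library that players can mine between rounds.

Assumptions: each competition phase yields per-player scores, stored as one
JSON file per round. The LogLibrary class and its file layout are
hypothetical, not CodeClash's actual log format.
"""
import json
import tempfile
from pathlib import Path
from statistics import mean
from typing import Dict, List


class LogLibrary:
    def __init__(self, root: Path) -> None:
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def store(self, round_no: int, scores: Dict[str, float]) -> Path:
        # One JSON file per round; zero-padding keeps lexical order == round order.
        path = self.root / f"round_{round_no:03d}.json"
        path.write_text(json.dumps({"round": round_no, "scores": scores}))
        return path

    def load_all(self) -> List[dict]:
        return [json.loads(p.read_text()) for p in sorted(self.root.glob("round_*.json"))]

    def lost_rounds(self, player: str) -> List[int]:
        """Rounds in which `player` did not have the top score: candidates to study."""
        return [
            rec["round"]
            for rec in self.load_all()
            if rec["scores"][player] < max(rec["scores"].values())
        ]

    def mean_score(self, player: str) -> float:
        return mean(rec["scores"][player] for rec in self.load_all())


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        lib = LogLibrary(Path(tmp) / "logs")
        lib.store(1, {"A": 10, "B": 5})
        lib.store(2, {"A": 3, "B": 7})
        lib.store(3, {"A": 8, "B": 8})
        print(lib.lost_rounds("A"))  # [2]
        print(lib.mean_score("A"))   # 7
```

Tied rounds are not counted as losses here; a stricter player might study those too.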

---

Research Results

- Scale: 1,680 matches involving 8 LLMs, including Claude Sonnet 4.5, GPT-5, Gemini 2.5 Pro, Qwen3-Coder, and Grok Code Fast.

- Performance Insights:

  - No single LLM dominated across all arenas.
  - Anthropic and OpenAI models held a slight overall edge.
  - Multi-agent matches showed greater volatility:
    - In six-player games, winners took only 28.6% of the total score.
    - In one-on-one games, winners took 78.0%.

- Code Analysis Ability:

  - GPT-5 excelled at analyzing competitors' codebases.
  - However, strong analysis skills did not guarantee competitive success.
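To put the 28.6% and 78.0% figures in context, it helps to compare each against an even split among players. The sketch below assumes "score share" means the winner's points divided by the sum of all players' points in a match; the article does not define the metric, so treat this reading as an assumption.

```python
"""Compare a winner's share of the total score with an even split.

Assumption: "score share" is read here as the winner's points divided by the
sum of all players' points in a match; the article does not define it.
"""
from typing import Dict


def winner_share(scores: Dict[str, float]) -> float:
    total = sum(scores.values())
    if total <= 0:
        raise ValueError("total score must be positive")
    return max(scores.values()) / total


def even_split(n_players: int) -> float:
    # Share each player would get if everyone scored the same.
    return 1 / n_players


if __name__ == "__main__":
    print(winner_share({"A": 78, "B": 22}))  # 0.78
    # Reported winner shares from the study, against the even-split baseline.
    for n, reported in [(6, 0.286), (2, 0.780)]:
        base = even_split(n)
        print(f"{n} players: winner {reported:.1%}, even split {base:.1%}, "
              f"ratio {reported / base:.2f}x")
    # 6 players: winner 28.6%, even split 16.7%, ratio 1.72x
    # 2 players: winner 78.0%, even split 50.0%, ratio 1.56x
```

Under this reading, part of the gap between 28.6% and 78.0% follows from the player count itself, since a six-way split starts from a much lower baseline than a two-way one.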

---

Limitations & Future Directions

The researchers acknowledged that CodeClash operates at a much smaller scale than large real-world systems.

Planned improvements include:

- Handling larger, more complex codebases.

- Supporting multiple simultaneous objectives.

Original article:

https://www.infoq.com/news/2025/11/codeclash-competitive-llm-coding/

---

AiToEarn: Monetizing AI Creativity

As AI tools and benchmarks evolve, platforms that integrate AI content generation, deployment, and analytics are becoming more important.

The open-source global monetization platform AiToEarn offers:

- Multi-platform Publishing: Douyin, Kwai, WeChat, Bilibili, Rednote, Facebook, Instagram, LinkedIn, Threads, YouTube, Pinterest, X (Twitter).

- AI Creation & Distribution Integration: End-to-end workflow connecting content creation, publishing, analytics, and model ranking.

- Creator Empowerment: Streamlined monetization across diverse digital ecosystems.

🔗 AI Model Rankings

---

Read Original: https://www.infoq.cn/article/ayF7iELxSWyS0CH4eh0e

---

Final Takeaway

In today’s fast-moving AI landscape, speed of iteration and adaptability have emerged as crucial competitive advantages.

For AI creators, developers, and entrepreneurs, tools like AiToEarn present a pathway to:

- Efficiently create AI-generated content.

- Instantly publish across major platforms.

- Track performance and rankings.

- Monetize creativity at scale.

With benchmarks like CodeClash and ecosystems like AiToEarn, the next wave of AI innovation will be not only smarter but also faster, more competitive, and globally connected.