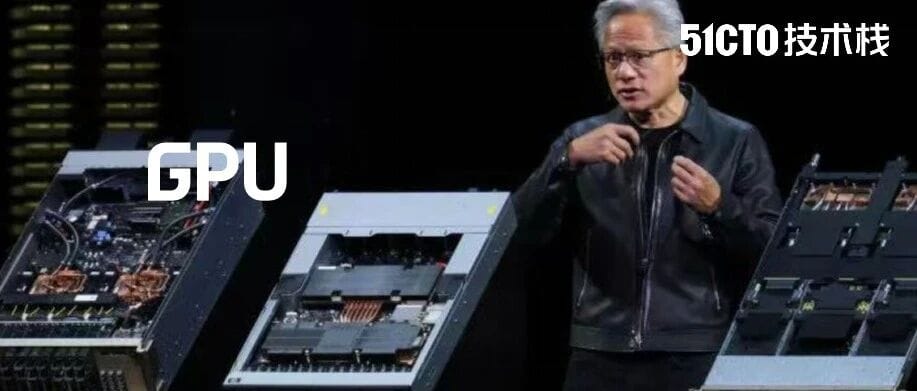

The AI World Is on Edge: How Many Years Can a GPU Last?

How Long Can a Single GPU Last — 2, 5, or Even 6 Years?

Over the past three years, the AI industry has been in overdrive — models grow larger, data centers multiply, and Nvidia’s stock keeps soaring.

Now, as global tech giants prepare to invest $1 trillion in AI data centers over the next five years, one critical question is raising concerns:

> How long can a GPU truly last?

This is no mere technicality: the answer has become a high-stakes assumption that can swing stock prices and investor sentiment.

Yet there is no official, standardized answer.

- Google, Oracle, and Microsoft estimate lifespans of up to 6 years.

- Skeptics, such as short seller Michael Burry, argue it’s closer to 2–3 years.

---

Why GPU Lifespan Matters

Hardware lifespan affects depreciation — the accounting method that allocates the cost of an asset over its usable life.

For AI data centers:

- Longer lifespan → lower annual depreciation expense → better profit margins

- Shorter lifespan → higher annual depreciation expense → rapid profit decline

Traditional servers are often depreciated over 5–7 years, but AI GPUs are still an unknown quantity. They have been purchased in huge volumes only recently, so their track record is short and any depreciation estimate is risky.
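To make the link between lifespan and profit concrete, here is a minimal sketch using straight-line depreciation, one common method. The fleet cost and revenue figures are hypothetical, chosen only to keep the arithmetic easy to follow; they are not taken from any company's filings.

```python
def straight_line_annual_expense(cost, useful_life_years, salvage_value=0.0):
    """Annual straight-line depreciation: (cost - salvage) / useful life."""
    if useful_life_years <= 0:
        raise ValueError("useful_life_years must be positive")
    return (cost - salvage_value) / useful_life_years


# Hypothetical figures, for illustration only.
FLEET_COST = 12_000_000_000      # assumed GPU purchase cost, in dollars
ANNUAL_REVENUE = 8_000_000_000   # assumed yearly revenue earned by that fleet


def profit_before_other_costs(useful_life_years):
    """Revenue minus depreciation only; every other cost is ignored here."""
    expense = straight_line_annual_expense(FLEET_COST, useful_life_years)
    return ANNUAL_REVENUE - expense


for years in (2, 3, 6):
    expense = straight_line_annual_expense(FLEET_COST, years)
    profit = profit_before_other_costs(years)
    print(f"{years}-year life: expense ${expense / 1e9:.1f}B, "
          f"profit ${profit / 1e9:.1f}B")
```

Under these assumed numbers, the same hardware and the same revenue produce three times the reported profit on a 6-year schedule as on a 2-year schedule, which is why the lifespan estimate draws so much scrutiny.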

---

AI GPUs: A Depreciation Puzzle

Nvidia’s first data center AI chips debuted in 2018.

The market exploded after ChatGPT’s launch in late 2022, and Nvidia’s data center revenue has grown from $15B to $115.2B in five years.

> “Three years, five, or seven?”

> — Haim Zaltzman, Vice Chair, Latham & Watkins

---

The Optimists: “Up to 6 Years” Lifespan

Supporters include: Google, Oracle, Microsoft, CoreWeave

Key points:

- Microsoft cites lifespans of between 2 and 6 years.

- CoreWeave uses a 6-year depreciation cycle, claiming strong secondary market value:

  - A100 (2020) units: fully rented out

  - H100 (2022) units: resale at 95% of original price

Despite this data-backed optimism, market sentiment dropped:

- CoreWeave down 57% from yearly high

- Oracle down 34% since September

---

The Skeptics: “Only 2–3 Years”

Short seller Michael Burry has shorted Nvidia and Palantir, believing tech giants overestimate GPU lifespans — inflating profits.

- Burry’s view: Servers last only 2–3 years

- Amazon and Microsoft declined to comment

- Meta, Google, and Oracle have not responded

---

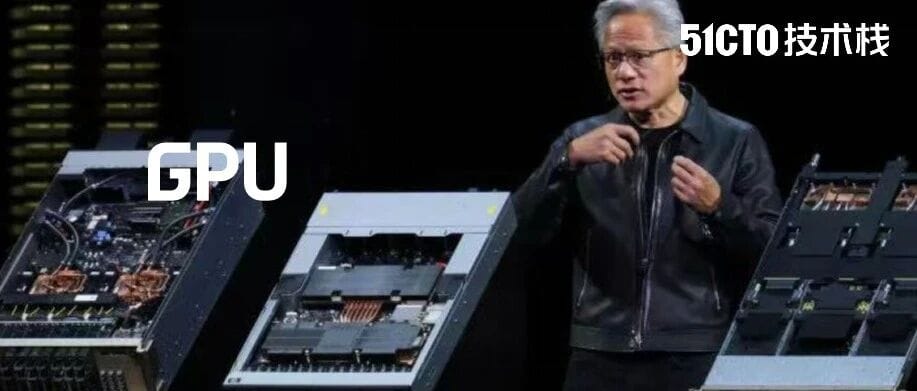

Jensen Huang Warns of Faster Obsolescence

Factors triggering quick depreciation:

- Hardware wear

- Rapid tech upgrades

- Reduced cost-effectiveness

Nvidia CEO Jensen Huang joked that after the Blackwell launch, Hopper GPUs would be nearly worthless.

Nvidia’s product cycle has accelerated from two years to one, with AMD following suit.

Amazon has shortened its server lifespan estimate from 6 to 5 years, citing faster AI iteration.

---

Microsoft’s Strategy: Diversify Procurement

Microsoft CEO Satya Nadella doesn’t want to overcommit to one GPU generation.

Key reasoning:

- Nvidia’s upgrade cycle is rapid

- Microsoft wants to avoid being locked into a 4–5-year depreciation schedule

- A GPU’s value decays faster than its physical lifespan because of performance gaps between generations
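The gap between accounting life and economic life can be sketched by comparing straight-line book value with an assumed resale value. Everything here is a hypothetical illustration: the constant yearly `annual_retention` rate is a simplifying assumption, not a measured market figure, and the function names are invented for this example.

```python
def book_value(cost, age_years, useful_life_years):
    """Straight-line book value with zero salvage value."""
    remaining_years = max(useful_life_years - age_years, 0)
    return cost * remaining_years / useful_life_years


def market_value(cost, age_years, annual_retention):
    """Assumed resale value: the chip keeps a fixed fraction of its value each year."""
    return cost * annual_retention ** age_years


def first_year_underwater(cost, useful_life_years, annual_retention):
    """First year-end at which resale value drops below book value, else None."""
    for age in range(1, useful_life_years + 1):
        if market_value(cost, age, annual_retention) < book_value(
            cost, age, useful_life_years
        ):
            return age
    return None


# Hypothetical scenarios on a 6-year schedule, cost normalized to 100.
for retention in (0.9, 0.6):
    year = first_year_underwater(100, 6, retention)
    print(f"retention {retention:.0%}/year -> underwater in year: {year}")
```

If resale value holds up well (the 90% case), the books never overstate the asset under this model. If a new generation cuts resale value sharply (the 60% case), the asset is worth less than its book value within the first year, even though the hardware still runs.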

---

Secondary GPU Market Volatility

In some industries, older GPUs work fine. In others, the latest architecture is mandatory, so resale prices swing dramatically.

> “They can still run, but they’re not worth running.”

---



A Fully Interpretable GPT-3 — OpenAI’s Breakthrough

OpenAI researchers have for the first time revealed the microscopic mechanisms inside GPT-3, identifying neural network “circuits” and showing:

- Smaller circuits → greater interpretability

- Potential path to fully interpretable GPT-3

---

Core Findings

- Neuron and attention head collaborations form interpretable circuits

- Circuit size matters — smaller is clearer

- Moves closer to transparent & accountable AI

---

Why It Matters

AI’s “black box” problem hinders safety & trust.

Micro-level mapping could:

- Make models more traceable

- Aid debugging & value alignment

- Improve security & compliance

---

Looking Ahead

Future models could be:

- Fully traceable in decision-making

- Economically reliable for content creators

- Adaptable to rapid tech shifts
